The reasoning is simple. I don't want our services to be publicly accessible, but our office still needs access to them. The services I'm talking about include Git, Chef, APT, Jenkins, and more.

These services are not the only issue. Imagine a problem in production that requires manual debugging. I would have to tunnel through the NAT instance manually just to debug the problem server. When I'm having any issue in production, the last thing I want is an extra step.

VPC Supported VPN

At first, I tried to use the IPsec VPN that is offered by AWS. The cost is negligible at $36/month. The benefits include fault tolerance, automatic configuration, and hardware support for Cisco ASA and a bunch of other routers. Two hours later, I was no closer to having a working VPN.

Lucidchart is a small, growing startup. I came in one Saturday and set up the network: Cat5 cables, Wi-Fi, internal DNS, DHCP, and OpenVPN. We don't have thousands of dollars to spend on fancy equipment and Cisco routers, so I fashioned a custom router out of an old desktop nobody was using. The point is that none of the configurations AWS offered worked on my custom router.

OpenVPN Strategies

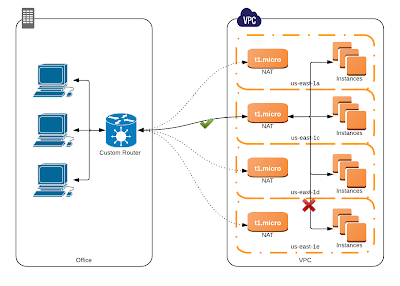

Having spent the two hours in vain, I chose to set up my own VPN using a tool I was already familiar with: OpenVPN. Two options immediately came to mind. First, a single VPN connection between our office and the NAT instances (load balanced for fault tolerance). Second, four VPN connections, one between the office and each NAT.

I'll discuss both strategies in some detail, but it should be noted that I implemented and tested both, and both work great under normal circumstances. I'll compare both strategies during normal use, in an entire AZ outage, and in a network outage between AZs. These scenarios gave me my basis for choosing the strategy for Lucidchart.

The single "load balanced" connection in OpenVPN is not load balanced at all. Basically, on failure, the client will just reconnect to a different server. See the images and captions for failure details.

Only one connection is active at a time. All traffic between the office and the VPC will go over this single connection.

During a network outage between AZs, the client will not reconnect to any other server, so you will be unable to reach some of the AZs in your VPC.
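The failover behavior described above can be modeled in a few lines of Python. This is only a sketch of the reasoning, not OpenVPN itself: the server names are hypothetical, and `pick_server` and `reachable_azs` are illustrative helpers that assume the client tries its configured servers in listed order and that traffic for other AZs must cross inter-AZ links from the active NAT.

```python
from typing import Callable, List, Optional, Sequence

# Hypothetical names for the four NAT instances, one per AZ.
SERVERS = ["nat-a", "nat-b", "nat-c", "nat-d"]


def pick_server(servers: Sequence[str],
                is_up: Callable[[str], bool]) -> Optional[str]:
    """Return the first server that answers, trying them in listed order."""
    for server in servers:
        if is_up(server):
            return server
    return None


def reachable_azs(active: Optional[str],
                  servers: Sequence[str],
                  inter_az_up: bool) -> List[str]:
    """List which NATs' AZs can be reached through the single active tunnel.

    Traffic for any AZ other than the active NAT's own has to cross the
    links between AZs, so it only gets through while those links are up.
    """
    if active is None:
        return []
    return [s for s in servers if s == active or inter_az_up]


# Normal use: the client connects to the first server in its list.
active = pick_server(SERVERS, lambda s: True)

# AZ outage: the first server is gone, so the client moves to the next one.
after_az_outage = pick_server(SERVERS, lambda s: s != "nat-a")

# Inter-AZ outage: the office can still reach the active server, so the
# client never reconnects, but only that server's AZ is reachable.
during_partition = reachable_azs(active, SERVERS, inter_az_up=False)

print(active, after_az_outage, during_partition)
```

The last case is the weak spot: from the client's point of view nothing has failed, so it has no reason to switch servers.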

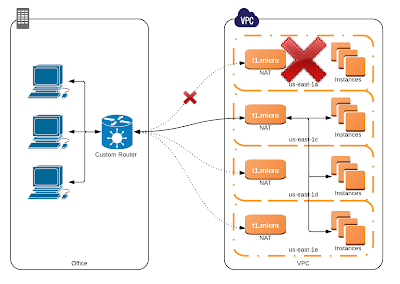

In comparison, the multiple connection strategy has one connection per AZ. Only packets for IPs found within the private and public subnets of an AZ will be routed to the NAT associated with that AZ.

Each NAT handles traffic for its own AZ. The office router routes traffic to a specific NAT.

During an entire AZ failure, only the connection associated with that AZ goes down. All of the other connections stay up.

During a network outage between AZs, the VPN is unharmed, because there is no cross-AZ traffic.
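To make the per-AZ routing concrete, here is a rough Python sketch of the decision the office router makes. The address ranges and tunnel names are assumptions for illustration only (a hypothetical 10.0.0.0/16 VPC with one /18 per AZ covering both its public and private subnets); a real router would do this with ordinary route table entries rather than code.

```python
import ipaddress
from typing import Dict, Optional, Set

# Hypothetical layout: one block per AZ, each mapped to that AZ's tunnel.
AZ_ROUTES: Dict[ipaddress.IPv4Network, str] = {
    ipaddress.ip_network("10.0.0.0/18"): "tun-az-a",
    ipaddress.ip_network("10.0.64.0/18"): "tun-az-b",
    ipaddress.ip_network("10.0.128.0/18"): "tun-az-c",
    ipaddress.ip_network("10.0.192.0/18"): "tun-az-d",
}

ALL_TUNNELS: Set[str] = set(AZ_ROUTES.values())


def tunnel_for(dest: str, tunnels_up: Set[str]) -> Optional[str]:
    """Return the tunnel that carries traffic for dest, or None.

    None means either the address is outside the VPC or the tunnel
    for its AZ is currently down.
    """
    addr = ipaddress.ip_address(dest)
    for network, tunnel in AZ_ROUTES.items():
        if addr in network:
            return tunnel if tunnel in tunnels_up else None
    return None


# Normal use: each destination goes to the NAT in its own AZ.
print(tunnel_for("10.0.70.5", ALL_TUNNELS))

# AZ b fails: only its tunnel is lost, the other AZs are unaffected.
without_b = ALL_TUNNELS - {"tun-az-b"}
print(tunnel_for("10.0.70.5", without_b))
print(tunnel_for("10.0.1.1", without_b))
```

Because each tunnel only ever carries traffic for its own AZ, an outage between AZs changes nothing in this table.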

I hope it's clear why I chose the second strategy, four separate connections. It takes slightly more setup, but the difference is literally five minutes of work. One could argue that this way has more moving parts. One could also argue that a car has more moving parts than a unicycle; that doesn't mean you'd ride a unicycle 30 miles to work.

Getting Started

If either of these works for you and you want to get started, I highly recommend reading the well-written OpenVPN documentation. That, and about 30 minutes of configuration, is all it took for me to get everything working.

Update: I've posted a tutorial, including configuration files, for OpenVPN on AWS VPC.

Thanks, this and the follow-on tutorial were most helpful! Thank you and thanks LucidChart!

Great tutorial.

Some questions.

1) I've never really heard of "between AZ" network issues where the AZs are still reachable from outside just fine. Has this happened before?

2) Do you still connect AZs with each other? E.g., if the VPN server instance dies in us-east-1a, you lose connection to all servers behind it even though they work fine. Would you still be able to connect to them through another VPN in another AZ (e.g. us-east-1b)?

1) Yes, I have had a case where AWS zones could not establish connections to each other.

2) Because of the way I've set up the VPN, the only way I would be unable to connect to an AZ is if the AZ is down. If it is down, then there's no sense in connecting to it. If the VPN server dies in us-east-1a, I have a few options. I could bring up a new one within 3 minutes because we have configuration management with Chef. I could change my network routes to go through a different tunnel. I could ssh to another VPN server, and from there ssh to a us-east-1a machine. It would be annoying, but I have plenty of options to get around a downed server. Without intervention, though, no: I could not connect to servers behind that VPN.